Planning for Scalability and Performance

Scale up your FME Server to increase job throughput and optimize job performance.

To increase the ability of FME Server to run jobs simultaneously, consider any of these approaches:

You can scale FME Server to support a higher volume of jobs by adding FME Engines on the same machine as the FME Server Core. A single active Core is all you need to scale processing capacity. The FME Server Core contains a Software Load Balancer that distributes jobs to the FME Engines. Each FME Engine can process one job at any one time, so if you have ten engines, you can run ten jobs simultaneously. If you have many simultaneous job requests, with jobs consistently in the queue, consider adding engines to your Core machine.

Note: Adding engines to the same machine does not reduce the time a single translation takes to run. That time depends on the underlying hardware and the design of the workspace. Complex workspaces, big data manipulation, and large datasets take more time to run.
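The relationship between engine count and throughput can be illustrated with a small model. The following is a minimal sketch, not FME Server code: the function `total_run_time` and the job durations are hypothetical, and the model assumes jobs are handed to whichever engine becomes free first.

```python
import heapq


def total_run_time(job_durations, engine_count):
    """Return the time needed to finish all jobs when each engine
    runs one job at a time and queued jobs start in submission order."""
    if engine_count < 1:
        raise ValueError("at least one engine is required")

    # Each heap entry is the time at which one engine becomes free.
    free_at = [0.0] * engine_count
    heapq.heapify(free_at)

    finished = 0.0
    for duration in job_durations:
        start = heapq.heappop(free_at)   # earliest available engine
        end = start + duration
        heapq.heappush(free_at, end)
        finished = max(finished, end)
    return finished


# Ten hypothetical jobs of 60 seconds each.
jobs = [60.0] * 10
print(total_run_time(jobs, 1))    # 600.0: jobs run one after another
print(total_run_time(jobs, 10))   # 60.0: all ten jobs run simultaneously
```

In this model, more engines shorten the time to clear the queue, but no run finishes faster than its longest single job, which matches the note above.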

Having multiple engines on the same machine also helps with Job Recovery.

If existing FME Engines are using all available system resources to process jobs, you can add FME Engines on a separate machine. This lets you draw on the system resources of multiple machines and run more jobs concurrently.

A fault-tolerant architecture provides for multiple, stand-alone FME Server installations. In addition to providing fault tolerance, this configuration distributes jobs among the FME Servers via a third-party load balancer.

Adding FME Engines on a Separate Machine provides flexibility for running jobs in close physical proximity to the data they read and write. This approach can be used within a network, or across networks that are geographically distributed.

Note: Distributing FME Engines across geographically distributed networks requires a high-speed, reliable network connecting the FME components. Specifically, the FME Engines read data and configuration files from, and write log files to, the FME Server System Share location. An intermittent connection is not sufficient; the network must always be connected.

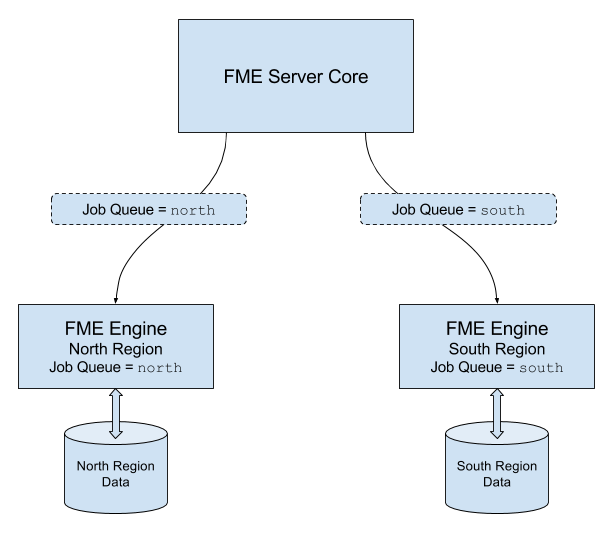

To ensure each job is run by the intended engine, you must use this approach in combination with Queue Control.

For example, consider a network with two data sources: one located in a northern region, and another located in a southern region. To run jobs efficiently, it makes sense to locate FME Engines in both regions. Jobs that are run on queue north access data in the northern data store, so these jobs are routed to FME Engines located in the northern region. Likewise, jobs that are run on queue south access data in the southern data store, so these jobs are routed to FME Engines located in the southern region.
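The north/south example can be sketched as a simple routing table. This is an illustrative model only and does not use FME Server's configuration format or API: the engine names, the `ENGINE_QUEUES` mapping, and the round-robin choice among eligible engines are all assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical mapping of engine name -> queues that engine serves.
ENGINE_QUEUES = {
    "engine-north-1": {"north"},
    "engine-north-2": {"north"},
    "engine-south-1": {"south"},
}


def eligible_engines(queue, engine_queues):
    """Return the engines that serve the given queue, in a stable order."""
    return sorted(name for name, queues in engine_queues.items()
                  if queue in queues)


def route(jobs, engine_queues):
    """Assign each (job_name, queue) pair to an engine serving that queue.

    Engines within a queue are chosen round-robin, which is an assumption
    of this sketch rather than documented dispatch behavior.
    """
    assignments = defaultdict(list)
    next_index = defaultdict(int)
    for job_name, queue in jobs:
        engines = eligible_engines(queue, engine_queues)
        if not engines:
            raise LookupError(f"no engine serves queue {queue!r}")
        engine = engines[next_index[queue] % len(engines)]
        next_index[queue] += 1
        assignments[engine].append(job_name)
    return dict(assignments)


jobs = [("load_parcels", "north"), ("load_roads", "south"),
        ("load_rivers", "north")]
for engine, names in sorted(route(jobs, ENGINE_QUEUES).items()):
    print(engine, names)
```

Jobs on queue north only ever land on the northern engines, and jobs on queue south only on the southern engine, so each job runs near the data it reads and writes.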

To exercise a finer level of control over how jobs are processed, consider the following approaches:

Queue Control manages and distributes the workload of engines running workspaces. In a distributed environment, you may wish to run small jobs on certain engines, and larger jobs on other engines.

Or, you may have a mix of OS platforms, on which certain FME formats can and cannot be run. For instance, consider an FME Server installed on Linux. Some formats that your business requires may not be supported on Linux, so it may be necessary to add an FME Engine on a Windows machine.

Queues are also used when Adding FME Engines on a Separate Machine, to route jobs to engines that are located in close physical proximity to the data they read and write.

You can set engines to process certain jobs based on the queue of the transformation request.

FME Server allows you to set job priority using the Priority directive of a queue. Jobs in higher-priority queues may execute before jobs in lower-priority queues.
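The effect of queue priority on execution order can be sketched with a priority heap. This is a minimal model under stated assumptions, not FME Server's scheduler: the `JobDispatcher` class, the queue names, and the priority values are hypothetical, and the sketch assumes that a larger number means a higher priority and that jobs within the same priority run in submission order.

```python
import heapq
import itertools

# Hypothetical queue priorities; a larger number is treated as higher priority.
QUEUE_PRIORITY = {"urgent": 10, "default": 5, "batch": 1}


class JobDispatcher:
    """Hands out waiting jobs, highest-priority queue first."""

    def __init__(self, queue_priority):
        self._queue_priority = queue_priority
        self._heap = []
        self._counter = itertools.count()  # tie-breaker: submission order

    def submit(self, job_name, queue):
        priority = self._queue_priority[queue]
        # heapq is a min-heap, so the priority is negated to pop the
        # highest priority first.
        heapq.heappush(self._heap, (-priority, next(self._counter), job_name))

    def next_job(self):
        """Return the next job name, or None if nothing is waiting."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


dispatcher = JobDispatcher(QUEUE_PRIORITY)
dispatcher.submit("nightly_export", "batch")
dispatcher.submit("weekly_report", "default")
dispatcher.submit("outage_alert", "urgent")
print(dispatcher.next_job())  # outage_alert
```

Priority only affects which waiting job is picked next; in this sketch a job that is already running is never interrupted by a later, higher-priority submission.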